MLflow gives you the eval library. Production needs the platform.

Author:

The LayerLens Team

Author Bio

Jake Meany is a digital marketing leader who has built and scaled marketing programs across B2B, Web3, and emerging tech. He holds an M.S. in Digital Social Media from USC Annenberg and leads marketing at LayerLens.

TL;DR

MLflow is a strong open-source eval library, but production teams end up building 10 critical infrastructure primitives on top of it.

Multi-tenancy (GitHub issue #5844, open since 2022), audit attestation, and replay are the three highest-cost gaps in the library path.

The weighted 10-criterion scorecard: MLflow 1.9/10 vs. Stratix 9.5/10 on production readiness (not library quality).

The real cost is engineering headcount diverted from product work to evaluation infrastructure maintenance.

The choice is not "which tool is better" but "does your org want to be in the business of building eval infrastructure."

Introduction

MLflow is genuinely good software. The eval library (mlflow.genai.evaluate) works. The tracking server is solid. The trace inspector added in v3.11.1 is useful. The open-source community behind it is real.

This is not an attack piece on MLflow.

It is a description of what happens when a team running AI agents in production makes a decision they thought was architectural but turned out to be organizational: choosing a library instead of a platform. The distinction matters. It matters in a way that shows up in your engineering headcount, not your benchmark scores.

The library is not the platform

A library is a set of functions you call. A platform is the set of services that surround those functions at production scale.

MLflow gives you mlflow.genai.evaluate. You call it. Your trace gets logged. Your judge score comes back. That part works exactly as advertised.

What MLflow does not give you is what every production team ends up building themselves, usually over the following three to twelve months, usually while someone senior is asking why the AI quality program is burning engineering cycles faster than it is producing signal.

The ten primitives every production eval team builds on top of their library

1. Multi-tenancy

MLflow GitHub issue #5844 has been open since 2022. The ask: native multi-tenant support. The current status: no maintainer commitment, no roadmap entry, no workaround beyond "run separate MLflow servers per tenant." Four years.

That is not a gap waiting to be filled in the next quarterly release. It is an architectural choice the maintainers made. MLflow is designed as a single-tenant tool. Running it multi-tenant means you are running multiple MLflow instances, with all the ops burden that implies, or building row-level security yourself on top of a data model that was not designed for it.

For a team running one internal AI model, this is fine. For a team running AI across multiple business units, multiple customers, or multiple environments that require data isolation, this is months of infrastructure work that produces no product differentiation.

Stratix ships with native multi-tenant primitives: row-level security at the persistence layer, per-tenant queues, per-tenant rate-limit buckets, per-tenant cost rollup, per-tenant audit log. The isolation is not something you configure. It is how the system was designed.
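Row-level isolation, whether you build it or buy it, comes down to one rule: no read or write reaches the data without a tenant filter. The sketch below shows that rule in plain Python with the standard-library `sqlite3` module. The `TenantScopedStore` class and the `eval_scores` table are hypothetical, not MLflow's or Stratix's data model, and a production system would enforce the filter inside the database itself (for example, PostgreSQL row-level security policies) rather than in application code.

```python
import sqlite3
from typing import List, Tuple


class TenantScopedStore:
    """Hypothetical store handle bound to a single tenant.

    Emulates row-level security in application code: the tenant_id is fixed
    when the handle is created and is added to every write and every query.
    """

    def __init__(self, conn: sqlite3.Connection, tenant_id: str) -> None:
        self._conn = conn
        self._tenant_id = tenant_id

    def log_score(self, trace_id: str, score: float) -> None:
        # Callers never pass a tenant_id, so they cannot write into another tenant.
        self._conn.execute(
            "INSERT INTO eval_scores (tenant_id, trace_id, score) VALUES (?, ?, ?)",
            (self._tenant_id, trace_id, score),
        )

    def scores(self) -> List[Tuple[str, float]]:
        # Every read is filtered by the bound tenant_id.
        cur = self._conn.execute(
            "SELECT trace_id, score FROM eval_scores WHERE tenant_id = ? ORDER BY trace_id",
            (self._tenant_id,),
        )
        return cur.fetchall()


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE eval_scores (tenant_id TEXT NOT NULL, trace_id TEXT NOT NULL, score REAL NOT NULL)"
)
acme = TenantScopedStore(conn, "acme")
globex = TenantScopedStore(conn, "globex")
acme.log_score("t-1", 0.91)
globex.log_score("t-2", 0.42)
print(acme.scores())    # [('t-1', 0.91)]
print(globex.scores())  # [('t-2', 0.42)]
```

Per-tenant queues, rate-limit buckets, and cost rollups follow the same pattern: the tenant is bound once, server-side, and never taken from the caller.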

2. Audit chain and attestation

Enterprise compliance reviewers do not accept "the eval said so." They ask: who ran the eval, on which data, against which version of the judge, and can you prove that record has not been modified since it was created?

MLflow logs are mutable. You can overwrite a run. There is no cryptographic anchor on the evaluation record. That is fine for internal experimentation. It is not fine for regulated industries where the evaluation record is part of a compliance artifact.

Stratix generates a hash-chained signed audit trail per evaluation. Every numeric claim carries a provenance triple: source, evidence URL, replication protocol URL. The attestation chain means a compliance reviewer can verify the record without trusting the team that produced it.
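A hash chain is what makes "has this record been modified?" answerable. Each entry's hash covers the record plus the previous entry's hash, so editing any earlier record invalidates every hash after it. The sketch below is a minimal standard-library illustration; the record fields, `append_record`, and `verify_chain` are hypothetical, and HMAC stands in for the public-key signature (for example, Ed25519) a real system would need so that a reviewer can verify the chain without holding the signing key.

```python
import hashlib
import hmac
import json
from typing import List

# Hypothetical demo key. A real system would keep signing material in a KMS or HSM
# and use asymmetric signatures so verifiers never need the secret.
SIGNING_KEY = b"demo-key-do-not-use"
GENESIS = "0" * 64


def _digest(prev_hash: str, record: dict) -> str:
    # Canonical JSON so the same record always hashes the same way.
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode()).hexdigest()


def _sign(digest: str) -> str:
    return hmac.new(SIGNING_KEY, digest.encode(), hashlib.sha256).hexdigest()


def append_record(chain: List[dict], record: dict) -> None:
    """Append an eval record whose hash covers the previous entry's hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    digest = _digest(prev_hash, record)
    chain.append({"record": record, "prev": prev_hash, "hash": digest, "sig": _sign(digest)})


def verify_chain(chain: List[dict]) -> bool:
    """Recompute every hash and signature; any edit to an earlier record breaks the chain."""
    prev_hash = GENESIS
    for entry in chain:
        digest = _digest(prev_hash, entry["record"])
        if entry["prev"] != prev_hash or entry["hash"] != digest:
            return False
        if not hmac.compare_digest(entry["sig"], _sign(digest)):
            return False
        prev_hash = digest
    return True


chain: List[dict] = []
append_record(chain, {"run": "r1", "judge": "judge-v3", "dataset": "support-qa@2", "score": 0.82})
append_record(chain, {"run": "r2", "judge": "judge-v3", "dataset": "support-qa@2", "score": 0.79})
print(verify_chain(chain))  # True

chain[0]["record"]["score"] = 0.95  # quietly "improve" an old result
print(verify_chain(chain))  # False
```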

3. Replay engine

A production AI system fails in production. Your team needs to understand what happened. The agent returned a hallucinated answer. The question is: was this a model failure, a prompt failure, or a data failure?

Without replay, you are reading logs and guessing. With replay, you re-run the exact trace against the same or a different model snapshot and see whether the failure reproduces.

MLflow does not have a replay engine. It has trace storage. The replay logic, the counterfactual prompt testing, the diff between original and replayed trace: your team builds that, or the answer to "why did this fail in production" stays "we are not sure."

Stratix has 13 modules under stratix/replay/. Replay is bit-exact against stored model snapshots, and every replay artifact carries a cryptographic anchor, so the result is verifiable, not just informative.
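The core replay loop is small: load the stored trace, send the same prompt to the pinned snapshot (or a different one), and diff the result against the original. A minimal Python sketch, assuming a deterministic model function; `StoredTrace`, `replay`, `fake_model`, and the snapshot IDs are hypothetical, and this is not Stratix's replay API. Real model calls are often nondeterministic, which is why exact replay requires, at minimum, pinned snapshots and fixed decoding settings; the stub sidesteps that.

```python
import difflib
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class StoredTrace:
    prompt: str
    model_snapshot: str  # pinned snapshot ID the original call used
    output: str


def replay(trace: StoredTrace, call_model: Callable[[str, str], str],
           snapshot: Optional[str] = None) -> dict:
    """Re-run a stored trace, optionally on another snapshot, and diff the outputs."""
    target = snapshot or trace.model_snapshot
    new_output = call_model(target, trace.prompt)
    diff = list(difflib.unified_diff(
        trace.output.splitlines(), new_output.splitlines(),
        fromfile=f"original@{trace.model_snapshot}", tofile=f"replay@{target}", lineterm="",
    ))
    return {"snapshot": target, "reproduced": new_output == trace.output, "diff": diff}


def fake_model(snapshot: str, prompt: str) -> str:
    # Deterministic stand-in for calling a pinned model snapshot.
    answers = {
        "model-2025-01": "Refunds take 30 days.",
        "model-2025-06": "Refunds take 14 days.",
    }
    return answers[snapshot]


trace = StoredTrace("How long do refunds take?", "model-2025-01", "Refunds take 30 days.")
same = replay(trace, fake_model)
other = replay(trace, fake_model, snapshot="model-2025-06")
print(same["reproduced"])   # True: the answer reproduces on the original snapshot
print(other["reproduced"])  # False: the other snapshot answers differently
print("\n".join(other["diff"]))
```

If a bad answer reproduces on the original snapshot but not on another, that suggests a model failure rather than a prompt or data failure, and the diff shows exactly what changed.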

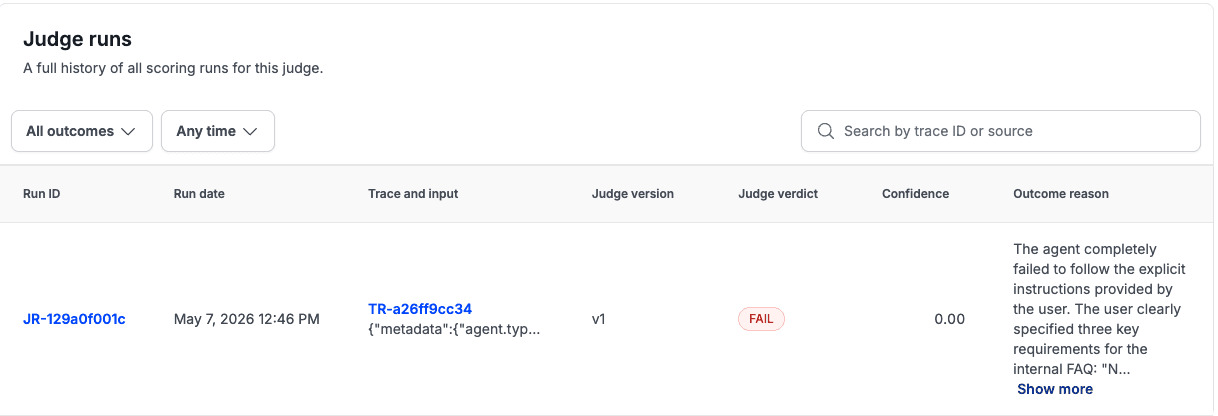

4. Online Evaluation Rules engine

Production monitoring is not a sampling loop. The question is not "what percentage of our outputs are we evaluating?" The question is "when this specific condition is true, fire this specific action."

When agent trajectory confidence drops below 0.7, alert PagerDuty. When the RAG quality judge returns FAIL on three consecutive traces from the same data source, open a Slack incident. When cost per eval exceeds the per-request budget, circuit-break before the next call.

MLflow has online judge sampling. It does not have a rules engine. The condition-action logic, the threshold hooks, the webhook routing: you build that layer, or your monitoring stays reactive instead of predictive.

Stratix ships an Online Evaluation Rules engine with 8 condition operators, 4 sampling strategies, and threshold-action hooks to Slack, PagerDuty, and arbitrary webhooks.
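The condition-action pattern reduces to rules stored as data: a metric, an operator, a threshold, and an action. The Python sketch below encodes the three example conditions from earlier in this section. It is a hypothetical illustration, not Stratix's rules API: the operator names, the derived `consecutive_fails` metric, and the $0.05 per-request budget are assumptions, and the actions append to a list where a real system would call Slack, PagerDuty, or a webhook. Sampling strategies are not shown.

```python
import operator
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable

# Hypothetical operator set. The article says Stratix ships 8 condition
# operators but does not list them; these six comparisons are illustrative.
OPS = {
    "lt": operator.lt, "le": operator.le,
    "gt": operator.gt, "ge": operator.ge,
    "eq": operator.eq, "ne": operator.ne,
}


@dataclass
class Rule:
    name: str
    metric: str        # key to read from the evaluated event
    op: str            # key into OPS
    threshold: float
    action: Callable[[dict], None]

    def matches(self, event: dict) -> bool:
        # A rule only fires when the event actually carries the metric it watches.
        return self.metric in event and OPS[self.op](event[self.metric], self.threshold)


class RulesEngine:
    def __init__(self, rules: list) -> None:
        self.rules = rules
        self._fail_streak = defaultdict(int)  # consecutive FAIL verdicts per data source

    def observe(self, event: dict) -> list:
        source = event.get("source", "unknown")
        if event.get("verdict") == "FAIL":
            self._fail_streak[source] += 1
        else:
            self._fail_streak[source] = 0
        # Expose the streak as a derived metric so stateful conditions are ordinary rules.
        enriched = {**event, "consecutive_fails": self._fail_streak[source]}
        fired = []
        for rule in self.rules:
            if rule.matches(enriched):
                rule.action(enriched)
                fired.append(rule.name)
        return fired


alerts = []  # stand-in for PagerDuty, Slack, and webhook calls
engine = RulesEngine([
    Rule("page-on-low-confidence", "confidence", "lt", 0.7,
         lambda e: alerts.append(("pagerduty", e["trace_id"]))),
    Rule("incident-on-3-fails", "consecutive_fails", "ge", 3,
         lambda e: alerts.append(("slack", e["source"]))),
    Rule("break-on-cost", "cost_usd", "gt", 0.05,  # hypothetical per-request budget
         lambda e: alerts.append(("circuit-break", e["trace_id"]))),
])

events = [
    {"trace_id": "a", "source": "kb-1", "confidence": 0.90, "verdict": "FAIL"},
    {"trace_id": "b", "source": "kb-1", "confidence": 0.65, "verdict": "FAIL"},
    {"trace_id": "c", "source": "kb-1", "confidence": 0.80, "verdict": "FAIL"},
]
fired = [engine.observe(e) for e in events]
print(fired)   # [[], ['page-on-low-confidence'], ['incident-on-3-fails']]
print(alerts)  # [('pagerduty', 'b'), ('slack', 'kb-1')]
```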

5. Methodology rigor

When a judge scores an output, how do you know the score means something?

The easy answer is "it correlates with human ratings." The honest answer requires knowing: which human ratings, measured with what inter-rater reliability statistic, at what floor, using which judge model, where the judge model is guaranteed not to be the same family as the system under test.

MLflow does not publish explicit inter-annotator agreement (IAA) floors per modality. The evaluation scores you get are real, but the methodology layer (kappa disclosure, contamination defense, judge-family independence) is on you to implement and document.

Stratix publishes per-modality kappa floors: Text 0.60, Chat 0.55, Video 0.50, Agent 0.55. Multi-judge independence is enforced: the judge model cannot be the same family as the model being evaluated. The perturbed-benchmark protocol (paired bootstrap or McNemar) is part of every evaluation run. This matters when a compliance reviewer or a customer audit asks you to defend the number.
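The kappa floor and the judge-family rule can both be checked mechanically before a score is trusted. The Python sketch below computes Cohen's kappa (observed agreement corrected for agreement expected by chance) between human labels and a judge's labels, refuses a judge from the same family as the model under test, and compares kappa against the floors listed above. The labels, family names, and `check_judge` helper are hypothetical; the same `cohens_kappa` function applies to two human annotators. The paired bootstrap and McNemar tests are not shown.

```python
from collections import Counter

# Per-modality kappa floors as published in the article; the dict is illustrative.
KAPPA_FLOORS = {"text": 0.60, "chat": 0.55, "video": 0.50, "agent": 0.55}


def cohens_kappa(rater_a: list, rater_b: list) -> float:
    """Cohen's kappa: observed agreement corrected for agreement expected by chance."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        raise ValueError("kappa is undefined when both raters use one identical label")
    return (observed - expected) / (1 - expected)


def check_judge(modality, human_labels, judge_labels, judge_family, model_family):
    """Refuse same-family judges, then compare judge-vs-human kappa to the modality floor."""
    if judge_family == model_family:
        raise ValueError(f"judge family {judge_family!r} matches the model under test")
    kappa = cohens_kappa(human_labels, judge_labels)
    return kappa, kappa >= KAPPA_FLOORS[modality]


human = ["pass", "pass", "fail", "pass", "fail", "fail", "pass", "pass", "fail", "pass"]
judge = ["pass", "pass", "fail", "fail", "fail", "fail", "pass", "pass", "pass", "pass"]
kappa, text_ok = check_judge("text", human, judge, judge_family="family-b", model_family="family-a")
_, chat_ok = check_judge("chat", human, judge, judge_family="family-b", model_family="family-a")
print(round(kappa, 3), text_ok, chat_ok)  # 0.583 False True
```

The same judge clears the chat floor (0.55) but not the text floor (0.60), which is the kind of result a per-modality disclosure makes visible.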

6. Resiliency primitives

AI API calls are expensive and unreliable. The call fails. The model returns a 429. The judge call takes 30 seconds and your timeout fires. The trace log is partial.

MLflow assumes your infra is stable. It does not ship per-key spend caps, runaway-loop detection, circuit-breaker primitives, adaptive throttling on 429 responses, or poison-pill handling. Some teams never need these. Teams running multi-step agents in production, where one bad loop can exhaust an API budget before anyone notices, need them.

Stratix ships all of these as production defaults: per-key spend caps, runaway-loop detection, circuit-breaker primitives, adaptive throttling based on hints in 429 response bodies, idempotency-key support, graceful degradation to a cheaper model on 503, poison-pill handling, and silent drift detection via an output-distribution monitor.
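Two of the primitives above, a per-key spend cap and a circuit breaker, fit in a short wrapper. This is a minimal Python sketch under stated assumptions: `GuardedClient` and its parameters are hypothetical, the wrapped function is assumed to return a `(result, cost_usd)` pair, and the clock is injectable so the example runs deterministically. Adaptive 429 throttling, idempotency keys, and drift detection are left out.

```python
import time
from typing import Callable, Optional, Tuple


class SpendCapExceeded(RuntimeError):
    """Raised when a key has already spent its budget."""


class CircuitOpen(RuntimeError):
    """Raised while the breaker is open, instead of calling the API."""


class GuardedClient:
    """Hypothetical wrapper: a per-key spend cap plus a consecutive-failure circuit breaker."""

    def __init__(self, cap_usd: float, failure_threshold: int = 3,
                 cooldown_s: float = 30.0, clock: Callable[[], float] = time.monotonic) -> None:
        self.cap_usd = cap_usd
        self.spent_usd = 0.0
        self.failure_threshold = failure_threshold
        self.cooldown_s = cooldown_s
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], Tuple[str, float]]) -> str:
        """Run fn, which returns (result, cost_usd), under both guards."""
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.cooldown_s:
                raise CircuitOpen("circuit open; failing fast")
            # Cooldown elapsed: half-open, so one more failure re-opens the breaker.
            self.opened_at = None
            self.failures = self.failure_threshold - 1
        # The cap is checked before each call, so the final call can overshoot by its own cost.
        if self.spent_usd >= self.cap_usd:
            raise SpendCapExceeded(f"spent ${self.spent_usd:.2f} of ${self.cap_usd:.2f}")
        try:
            result, cost_usd = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        self.failures = 0
        self.spent_usd += cost_usd
        return result


now = [0.0]  # fake clock so the example is deterministic
client = GuardedClient(cap_usd=0.10, failure_threshold=2, cooldown_s=30.0, clock=lambda: now[0])


def flaky_judge():
    raise TimeoutError("judge call timed out")


for _ in range(2):
    try:
        client.call(flaky_judge)
    except TimeoutError:
        pass

try:
    client.call(lambda: ("ok", 0.04))
except CircuitOpen:
    print("circuit open")  # two failures opened the breaker

now[0] = 31.0
print(client.call(lambda: ("ok", 0.04)))  # ok (cooldown elapsed)
```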

7. Identity and access at enterprise tier

MLflow's OSS tier does not ship SAML or OIDC. Those are Databricks-managed MLflow features, gated by Databricks tier pricing. If you want scoped API keys, per-key audit logs, or key rotation with a grace period on the OSS tier, you are adding that yourself.
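For teams adding this themselves, the core of scoped keys with rotation grace is small. A minimal Python sketch, with every name hypothetical (this is neither MLflow's nor Stratix's API): keys are stored only as SHA-256 hashes, each key carries a set of scopes such as `traces:read`, and rotating a key issues a replacement while the old key keeps working until its grace period ends. Per-key audit logging is omitted.

```python
import hashlib
import secrets
import time
from dataclasses import dataclass
from typing import Optional


@dataclass
class ApiKey:
    scopes: frozenset
    expires_at: Optional[float] = None  # set only when the key is rotated out


class KeyRegistry:
    """Hypothetical key store: scoped keys, kept only as hashes, with rotation grace periods."""

    def __init__(self, clock=time.time) -> None:
        self._keys = {}  # sha256(raw key) -> ApiKey
        self._clock = clock

    @staticmethod
    def _digest(raw: str) -> str:
        return hashlib.sha256(raw.encode()).hexdigest()

    def issue(self, scopes) -> str:
        raw = secrets.token_urlsafe(32)
        self._keys[self._digest(raw)] = ApiKey(frozenset(scopes))
        return raw  # shown to the caller once; only the hash is stored

    def rotate(self, old_raw: str, grace_s: float) -> str:
        old = self._keys[self._digest(old_raw)]
        old.expires_at = self._clock() + grace_s  # old key keeps working until then
        return self.issue(old.scopes)

    def authorize(self, raw: str, scope: str) -> bool:
        key = self._keys.get(self._digest(raw))
        if key is None:
            return False
        if key.expires_at is not None and self._clock() >= key.expires_at:
            return False
        return scope in key.scopes


now = [1000.0]
registry = KeyRegistry(clock=lambda: now[0])
old_key = registry.issue({"traces:read"})
print(registry.authorize(old_key, "traces:read"))     # True
print(registry.authorize(old_key, "feedback:write"))  # False: scope not granted

new_key = registry.rotate(old_key, grace_s=3600)
now[0] += 1800
print(registry.authorize(old_key, "traces:read"))     # True: still inside the grace period
now[0] += 3600
print(registry.authorize(old_key, "traces:read"))     # False: grace period over
print(registry.authorize(new_key, "traces:read"))     # True
```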

Stratix ships SAML/OIDC at the base tier, scoped API keys (traces:read, feedback:write, etc.), API-key rotation with a grace period, per-key audit log, and per-key spend cap. These are not enterprise add-ons.

8. Compliance posture

SOC 2, GDPR, HIPAA, EU AI Act, ISO 42001, SR 11-7. These are not features. They are table stakes for selling to regulated industries, and they are not something you add to an OSS library after the fact.

Stratix ships with a compliance dashboard and gap analysis covering all six. The attestation chain and hash-chained audit trail described above are part of this compliance posture, not separate features.

9. Cross-tenant safety evaluation

When one tenant's data or model behavior could affect another tenant's evaluation context (through shared judge resources, shared ranking pools, or a shared cache), you need a cross-tenant safety evaluator that flags contamination before it propagates.

MLflow, as a single-tenant tool by design, does not have this concept. Stratix has a cross-tenant safety evaluator that treats a failure as a security incident: if cross-tenant contamination is detected, the run fails hard and alerts the operator before any result is surfaced.
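The fail-hard behavior can be expressed as a gate between scoring and reporting. A minimal Python sketch, assuming every item a run pulls from a shared resource is tagged with the tenant that owns it; `ContextItem`, `finalize_run`, and the source labels are hypothetical, and this is not Stratix's evaluator. If any item belongs to another tenant, the operator is alerted and the run raises instead of returning scores.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


class CrossTenantContamination(Exception):
    """Raised to fail the run hard so no result from it is surfaced."""


@dataclass(frozen=True)
class ContextItem:
    tenant_id: str
    source: str  # hypothetical labels, e.g. "judge-cache" or "ranking-pool"
    key: str


def finalize_run(run_tenant: str, items: List[ContextItem], scores: Dict[str, float],
                 alert: Callable[[str], None]) -> Dict[str, float]:
    """Release scores only if every shared-resource item the run touched belongs to its tenant."""
    foreign = [item for item in items if item.tenant_id != run_tenant]
    if foreign:
        detail = ", ".join(f"{item.source}:{item.key}@{item.tenant_id}" for item in foreign)
        message = f"cross-tenant items in run for {run_tenant!r}: {detail}"
        alert(message)                           # the operator hears about it first
        raise CrossTenantContamination(message)  # and the scores are never returned
    return scores


alerts = []
clean = [ContextItem("acme", "judge-cache", "q-17")]
mixed = clean + [ContextItem("globex", "ranking-pool", "doc-9")]

print(finalize_run("acme", clean, {"faithfulness": 0.9}, alerts.append))  # {'faithfulness': 0.9}
try:
    finalize_run("acme", mixed, {"faithfulness": 0.9}, alerts.append)
except CrossTenantContamination:
    print(alerts[-1])  # cross-tenant items in run for 'acme': ranking-pool:doc-9@globex
```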

10. A named support model

Open-source support is the community, Stack Overflow, and your own engineers. That works for dev tools. It does not work when an AI system fails in production at 2 AM on a Saturday with a customer escalation pending.

Stratix ships with a named Customer Engineer, 24/7 incident response, quarterly executive business reviews with roadmap-input rights, and a contractual SLA of 99.95% or higher, reported on a status page and backed by a defined change-management notice cadence. The library tier never includes that customer engineering model.

The 10-criterion scorecard

The table below scores both tools against the ten production primitives. Weights reflect the relative cost of building each primitive yourself if you choose the library path. Score is 0-10 per criterion; higher is better.

| Criterion | Weight | MLflow | Stratix |
|---|---|---|---|
| Multi-tenant primitives | 20% | 1 | 10 |
| Audit chain + attestation | 15% | 2 | 10 |
| Replay engine | 15% | 1 | 9 |
| Online Evaluation Rules engine | 15% | 3 | 9 |
| Methodology rigor | 10% | 3 | 10 |
| Resiliency primitives | 10% | 2 | 9 |
| Identity and access | 5% | 4 | 9 |
| Compliance posture | 5% | 2 | 10 |
| Cross-tenant safety | 3% | 0 | 9 |
| Support model and SLA | 2% | 2 | 9 |
| Weighted total | 100% | 1.9 / 10 | 9.5 / 10 |
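The weighted total is a plain weighted sum: each criterion's score times its weight, summed, with the weights adding to 100%. The short Python sketch below recomputes both columns from the per-criterion rows, which makes the arithmetic easy to audit and lets a team re-run it with its own weights. `SCORECARD` and `weighted_total` are illustrative names.

```python
# (criterion, weight %, MLflow score, Stratix score), copied from the scorecard rows.
SCORECARD = [
    ("Multi-tenant primitives", 20, 1, 10),
    ("Audit chain + attestation", 15, 2, 10),
    ("Replay engine", 15, 1, 9),
    ("Online Evaluation Rules engine", 15, 3, 9),
    ("Methodology rigor", 10, 3, 10),
    ("Resiliency primitives", 10, 2, 9),
    ("Identity and access", 5, 4, 9),
    ("Compliance posture", 5, 2, 10),
    ("Cross-tenant safety", 3, 0, 9),
    ("Support model and SLA", 2, 2, 9),
]


def weighted_total(column: int) -> float:
    """Sum of weight x score across criteria, using integer percent weights that must total 100."""
    if sum(row[1] for row in SCORECARD) != 100:
        raise ValueError("weights must sum to 100%")
    return sum(row[1] * row[column] for row in SCORECARD) / 100


print(f"MLflow:  {weighted_total(2):.2f} / 10")   # MLflow:  1.94 / 10
print(f"Stratix: {weighted_total(3):.2f} / 10")   # Stratix: 9.50 / 10
```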

MLflow's score is not low because MLflow is bad. It is low because the scorecard is asking the wrong question for MLflow. MLflow scores a 10 on the dimension the table does not include: "excellent open-source eval library." That is what it was built to be.

The comparison exists because teams regularly find themselves at the moment of decision (library or platform) without a clear picture of what they are signing up for in the library path. This is an attempt to make that picture clearer.

What the work-shift actually looks like

The engineering cost of building these ten primitives on top of an OSS library is not theoretical. Teams who have done it describe the arc the same way: the initial eval library integration takes a few days. The first production incident that exposes missing infrastructure takes three to six months to address. The compliance audit that asks for cryptographic attestation takes another quarter.

None of that engineering produces a better model. None of it ships a better product. It maintains infrastructure that exists because the team chose a library instead of contracting for a platform.

The question is not whether Stratix is better than MLflow as a library. The question is whether the organization wants to be in the business of building and maintaining evaluation infrastructure, or in the business of the thing the evaluation infrastructure is supposed to improve.

The Stratix Python SDK

For teams running production AI who want to run this evaluation infrastructure without building it:

Full documentation: stratix.layerlens.ai

GitHub: github.com/layerlens/stratix-python

Key Takeaways

MLflow excels as an eval library. The gap is in the ten production primitives teams build around it.

Multi-tenancy (20% weight) is the single highest-cost primitive to build yourself and has no MLflow roadmap commitment.

Audit attestation and replay (15% each) are the next two highest-cost gaps, both critical for regulated industries.

The real question is organizational: does your team want to build and maintain eval infrastructure, or ship product?

Stratix scores 9.5/10 on production readiness because these primitives ship as defaults, not add-ons.

Frequently Asked Questions

Is MLflow bad for AI evaluation?

No. MLflow is an excellent open-source eval library. The gap is in the production infrastructure primitives (multi-tenancy, audit attestation, replay, rules engine) that teams end up building on top of it.

What is the difference between an eval library and an eval platform?

A library gives you functions to call (run an eval, log a trace, score an output). A platform gives you the services that surround those functions at production scale: multi-tenancy, compliance, replay, monitoring rules, and support.

Does MLflow support multi-tenancy?

Not natively. GitHub issue #5844 has been open since 2022 with no maintainer commitment. The current workaround is running separate MLflow server instances per tenant.

How does Stratix handle compliance requirements like SOC 2 and EU AI Act?

Stratix ships a compliance dashboard covering SOC 2, GDPR, HIPAA, EU AI Act, ISO 42001, and SR 11-7. The hash-chained audit trail provides cryptographic attestation for every evaluation record.

What does the 10-criterion scorecard measure?

It measures production readiness across ten infrastructure primitives. It does not measure library quality, where MLflow scores highly.

Can I use MLflow and Stratix together?

Yes. Teams that already have MLflow experiments can integrate Stratix for the production layer while keeping MLflow for local experimentation and tracking.

Methodology

The 10-criterion scorecard was developed from interviews with engineering teams who have deployed AI evaluation systems in production, mapped against the feature documentation of both platforms as of May 2026. Weights reflect the relative engineering cost (person-months) of building each primitive from scratch. Scores reflect native capability (10 = ships as a production default, 0 = no concept of the feature).

Full evaluation data is available on Stratix.

The MLflow multi-tenancy issue referenced in this article is GitHub issue #5844. The issue has been open since 2022. As of the publication date of this article, it has no maintainer resolution.